By Khan Academy

In March 2023, we introduced Khanmigo, a groundbreaking AI-powered learning tool designed to assist students and educators. At the same time, we shared our initial approach to the responsible development of AI, a set of guidelines that helped us adapt AI for the classroom. We are proud that our work was recognized by the independent Common Sense AI Ratings. Since then, we’ve further developed our AI guidelines and supporting processes, and today, we are excited to share an update.

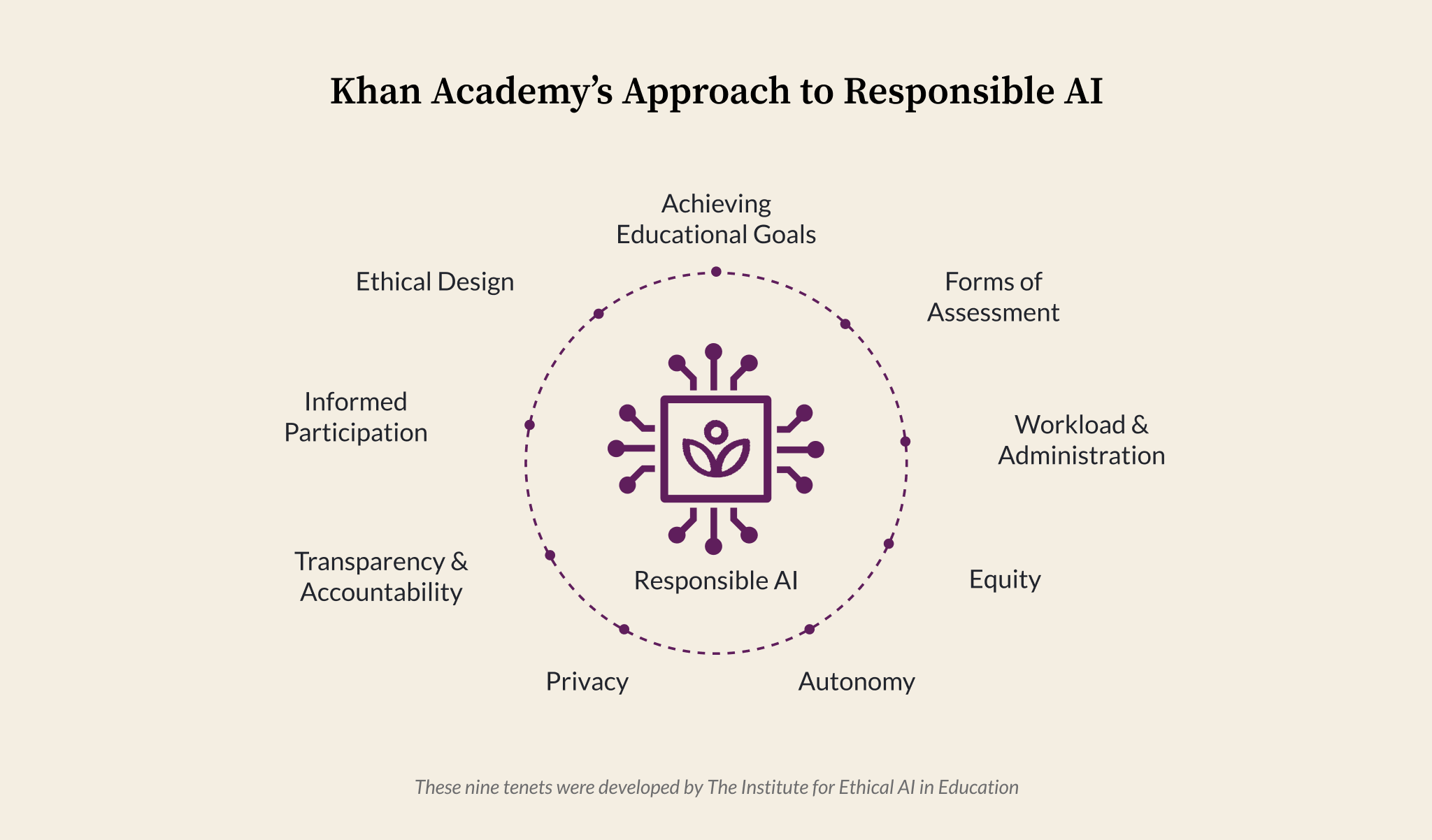

As a starting point, our team adopted the nine foundational tenets from The Ethical Framework for AI in Education developed by The Institute for Ethical AI in Education, with a few minor edits for clarity and relevance to our specific purpose. The tenets are as follows:

1. Achieving Educational Goals – AI should advance well-defined educational objectives grounded in societal, educational, or scientific evidence that benefit learners.

2. Forms of Assessment – AI should broaden the scope of learner talents it assesses and recognizes.

3. Administration & Workload – For educator-specific tools, AI should enhance institutional efficiency while preserving human relationships.

4. Equity – AI systems must promote equity and avoid discrimination across different learner groups.

5. Learner Autonomy – AI should empower learners to take greater control of their learning and development.

6. Privacy – A balance should be struck between privacy and the legitimate use of data for achieving well-defined and desirable educational goals.

7. Transparency & Accountability – Humans are ultimately responsible for educational outcomes and must oversee AI operations effectively.

8. Informed Participation – Learners, educators, and practitioners should understand AI’s implications to make informed decisions.

9. Ethical Design – AI tools should be developed by individuals who understand their impact on education.

These are a great starting point, but they don’t tell us what we should do about them as developers of AI tools. We found that the Artificial Intelligence Risk Management Framework published by the National Institute of Standards and Technology helped us think about how to evaluate and manage potential risk from AI. So, we combined the two into the framework below.

Today, we are excited to share our Responsible AI Framework, its core principles, and how we integrate it into our product development processes to create meaningful, safe, and ethical learning experiences.

Refining the Framework

The Institute for Ethical AI in Education developed a set of criteria and a procurement checklist for each of the AI tenets. We adapted the criteria to create guardrails for product development to further clarify how to apply the tenets to our work. For example, for the tenet Achieving Educational Goals, we have the following:

Tenet 1: Achieving Educational Goals

1.1 There is a clear educational goal that can be achieved through the use of AI & AI is capable of achieving the desired objectives and impacts.

1.2 There is a monitoring & evaluation plan to determine the impact expected through the use of AI and verify that the AI is performing as intended.

1.3 We have the means to determine AI’s trustworthiness, and in areas where the AI is not trustworthy, there is an action plan to address improvement related to the design of the AI, the implementation, and/or other factors.

1.4 There are mechanisms in place to prevent non-educational uses of the AI.

We similarly developed guardrails for each of the other eight tenets. You can see all of the guardrails associated with the tenets.

Applying the Framework to Feature Development

When considering an AI-powered feature, we can use this framework to identify risks and then walk through a process of risk rating and mitigation. For each risk identified, we rate the probability of the risk (low, medium, or high) and the impact of the risk (low, medium, or high). Note that these are our internal definitions of risk; what we consider high risk is actually low risk when compared to the risks outlined in regulatory systems.

For example, when we initially launched Khanmigo, we went through each guardrail and identified risks. For guardrail 1.4, “There are mechanisms in place to prevent non-educational uses of the AI,” we identified a safety risk resulting from inappropriate or harmful uses. We rated this risk as high in both likelihood and impact if not addressed. Now, we didn’t have any direct evidence to support these ratings because we were launching a tool that hadn’t been tried before: a tutor where students would have conversations with a generative AI model. However, we could draw on what we had seen in our discussion spaces and our community support teams’ expertise in other online spaces to make an estimate. We now had a spreadsheet that contained many rows that each looked like this:

| Tenet | Guardrail | Risk | Likelihood | Impact |
| --- | --- | --- | --- | --- |
| 1. Achieving Educational Goals | 1.4 There are mechanisms in place to prevent non-educational uses of the AI. | Safety risk – Inappropriate or harmful uses | High | High |

We then used the likelihood and impact ratings to identify the risks rated high on both dimensions. For each of those, we identified and implemented mitigation strategies to lower the risk.

| Guardrail | Risk | Likelihood | Impact | Mitigation |
| --- | --- | --- | --- | --- |
| 1.4 There are mechanisms in place to prevent non-educational uses of the AI. | Safety risk – Inappropriate or harmful uses | High | High | - Moderation API to identify responses that may be inappropriate, harmful, or unsafe<br>- AI responds appropriately to flagged chats and also directs users to the community standards FAQ<br>- When the moderation system is triggered, it automatically sends an email and notification to an adult connected to the child’s account<br>- Community Support is involved in creating and maintaining the moderation protocols<br>- Disable an account (if needed) when a message is flagged<br>- Chat transcripts of all chat interactions are viewable by teachers and parents<br>- “Red-teaming” to deliberately try to “break” or find flaws in the AI to uncover potential vulnerabilities, making the system stronger and more secure in the long run<br>- Terms of Service & in-product messaging that make it clear our platform is intended for educational uses, making it a violation to attempt to jailbreak or similarly guide the bot into conversations for non-educational reasons |
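The rating and filtering steps described above can be illustrated with a small Python sketch. This is only an illustration under stated assumptions: the post describes a spreadsheet, not code, so the names `Level`, `RiskEntry`, and `needs_mitigation` are hypothetical, and the second row in the example register is invented purely to show a risk that does not pass the filter.

```python
from dataclasses import dataclass, field
from enum import IntEnum


class Level(IntEnum):
    """Three-point scale used for both likelihood and impact."""
    LOW = 1
    MEDIUM = 2
    HIGH = 3


@dataclass
class RiskEntry:
    """One row of the risk spreadsheet (hypothetical representation)."""
    tenet: str
    guardrail: str
    risk: str
    likelihood: Level
    impact: Level
    mitigations: list[str] = field(default_factory=list)


def needs_mitigation(entry: RiskEntry) -> bool:
    """Flag risks rated high in BOTH likelihood and impact."""
    return entry.likelihood == Level.HIGH and entry.impact == Level.HIGH


register = [
    # Row taken from the example in the text.
    RiskEntry(
        tenet="1. Achieving Educational Goals",
        guardrail="1.4 There are mechanisms in place to prevent "
                  "non-educational uses of the AI.",
        risk="Safety risk – Inappropriate or harmful uses",
        likelihood=Level.HIGH,
        impact=Level.HIGH,
    ),
    # Hypothetical row, invented for illustration only (not from the source).
    RiskEntry(
        tenet="(hypothetical tenet)",
        guardrail="(hypothetical guardrail)",
        risk="(hypothetical lower-priority risk)",
        likelihood=Level.MEDIUM,
        impact=Level.LOW,
    ),
]

# Keep only the rows that call for mitigation work first.
flagged = [entry for entry in register if needs_mitigation(entry)]

for entry in flagged:
    print(f"{entry.guardrail} -> {entry.risk}")
```

Using an ordered scale rather than free-text ratings is a design choice made for this sketch; it makes it easy to compare ratings before and after mitigations are applied.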

At our launch in March of 2023, our best estimate was that the mitigations would be enough to make the likelihood and impact of safety issues drop to medium. This has turned out to be the case. Most inappropriate uses could be classified as kids testing the limits of the system. When they run up against the flags and the conversation stops, they quickly move on.

We continue to use our framework for responsible AI in education to identify risks for new features, such as our teacher tools and Writing Coach. The framework helps us determine if a particular feature has a risk related to the guardrails and rate it, which allows us to focus our attention on the riskiest areas of the feature.

Implementing the Framework Consistently

A framework is only meaningful when consistently applied. At Khan Academy, we integrate Responsible AI into our product development process through:

1. Responsible AI Steering Group

A leadership team representing Product, Data, and User Research facilitates strategic alignment and oversight.

2. Responsible AI Extended Working Group

A cross-functional team evaluates upcoming capabilities against the framework and monitors launched features.

3. Integration into Product Design

We assess features during the design phase for alignment with our Responsible AI Framework, and we continue to evaluate them as they evolve through demos and other feedback loops.

4. Continuous Communication and Evaluation

We gather qualitative and quantitative insights, track industry trends, and engage with stakeholders (internal and external) to refine our approach.

Looking Ahead

As AI continues to evolve rapidly, we remain steadfast in our commitment to leveraging it responsibly.

We believe that by upholding these principles together, we can harness AI to enhance student learning and empower learners and teachers worldwide.

We look forward to hearing how your organization is implementing AI in a responsible way. Onwards!